Code to reproduce results: github.com/shreyassharma1/prompt-priming-blog

The Problem

While working on a project analyzing cognitive patterns in reasoning traces, I faced an issue where one of my configurations yielded no chain of thought reasoning or trace lengthening even after 256 steps of GRPO. This specific configuration was training Llama-3.1 8B Instruct on the reasoning task Countdown from ReasoningGym (Stojanovski et al., 2025). Countdown is a task where given a list of N numbers and a target value, you need to find an arithmetic expression using all numbers exactly once that evaluates to the target. What makes Countdown a good testbed for reasoning is that it benefits from search: trying candidate expressions, verifying them, and backtracking when they don't work. In principle, RL should be able to teach a model to allocate more inference-time compute to this kind of exploration. That was the hope, at least.

Here's an example task of Countdown from ReasoningGym's implementation:

Question: Calculate 139 using all of these numbers: 36, 29, 95, 32, 4, 15. Each number may be used at most once.

Answer: 15 - 4 + 95 + 36 - 32 + 29

Training Setup

Before describing what went wrong and the fix, here's the training configuration:

| Setting | Value |

|---|---|

| Model | meta-llama/Llama-3.1-8B-Instruct with LoRA (rank 32) |

| Algorithm | GRPO |

| Group size | 8 rollouts per problem, 64 problems per batch |

| Training set | 16,384 problems (256 GRPO steps at 64 problems/step) |

| Learning rate | 1e-5 |

| Max generation length | 1,024 tokens |

| Temperature | 1.0 |

The system prompt I was using to encourage chain-of-thought and inference-time scaling was:

"Reason through each problem step by step before giving the final answer. State the final answer clearly at the end."

I used Tinker (Thinking Machines Lab, 2025) for all my training and inference.

What Didn't Work

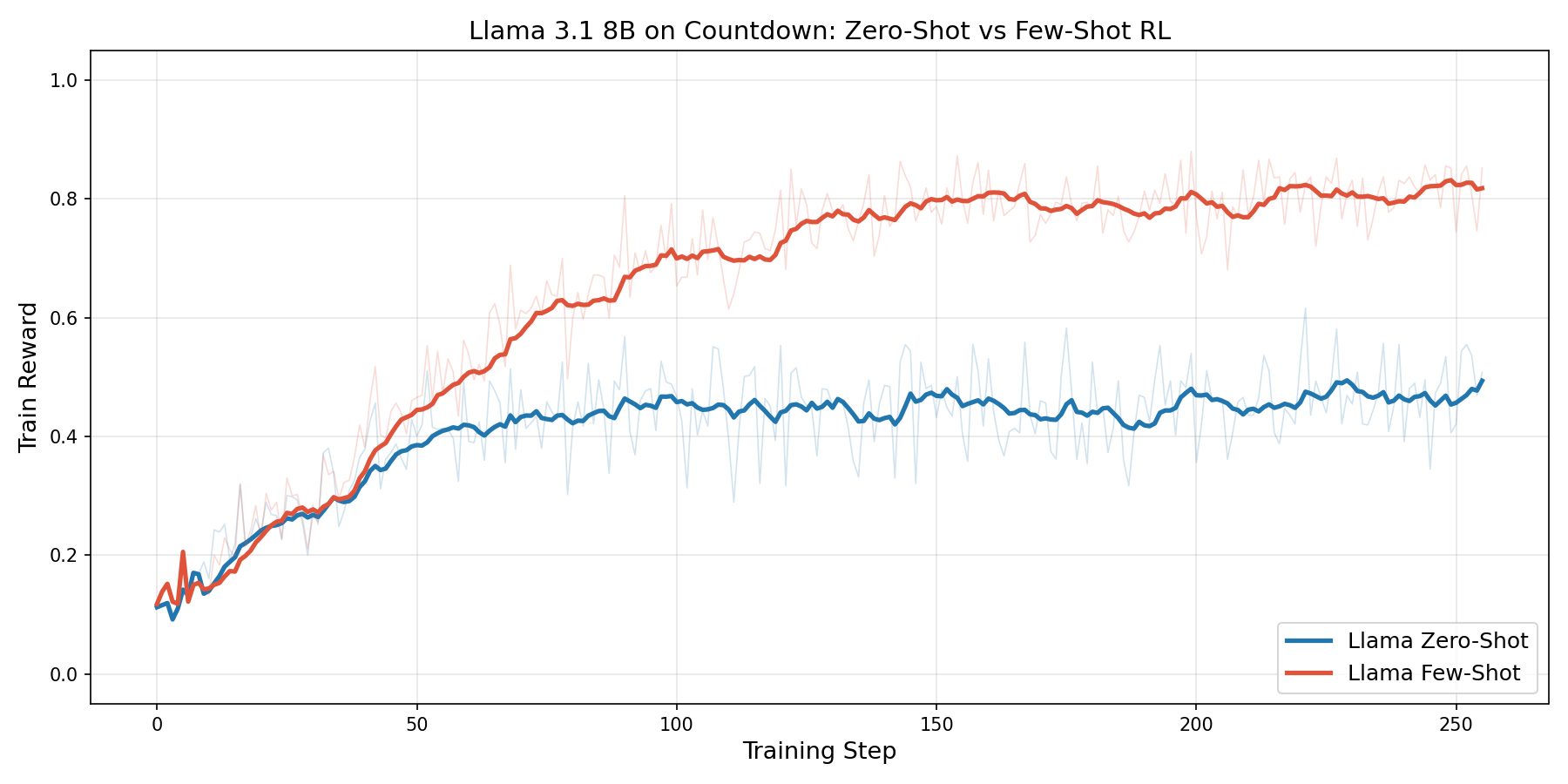

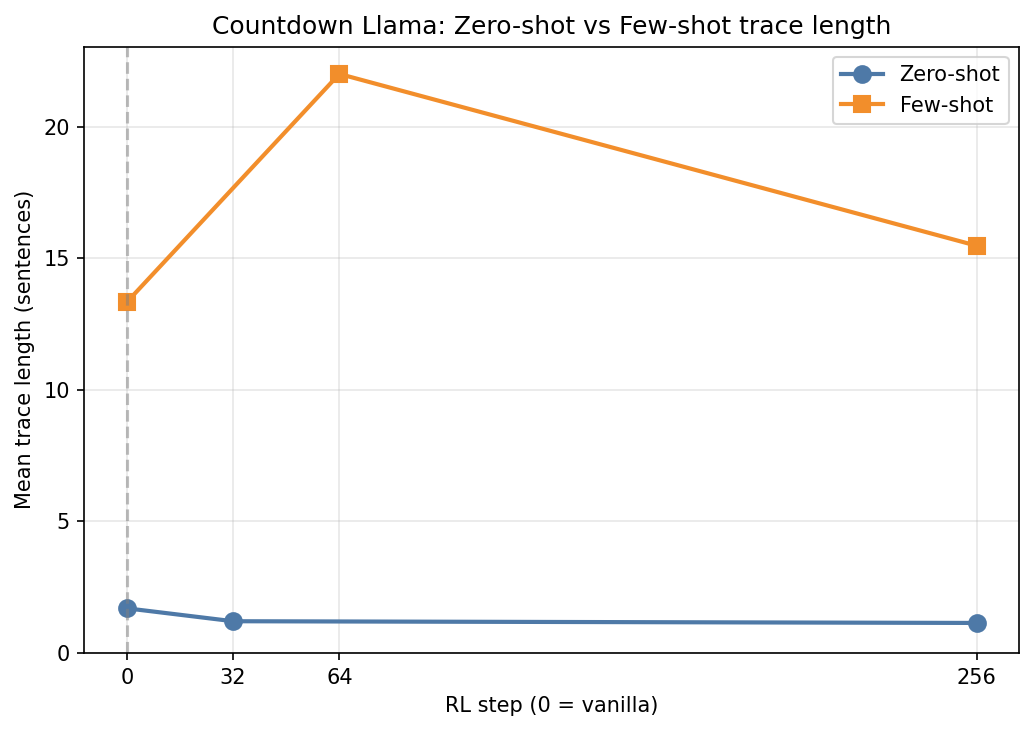

In the zero-shot configuration (system prompt plus task, no examples) the model quickly converged to short, formulaic outputs. Mean trace length stayed under two sentences throughout training, and reward plateaued around 0.4. The model was essentially guessing single expressions without any search process.

I tried several variations of "think step by step" style instructions in the system prompt. None of them changed the outcome in a meaningful way. The model remained stuck in the same low-effort regime.

What Worked

Finally, I tried inserting an example question, reasoning trace, and answer into the prompt. The reasoning trace was fairly short and simple — it was only 5 sentences long and consisted of trying one solution, checking it, realizing it was wrong, trying an alternate solution, and realizing it was correct. Here's the exact example I added to the prompt, alongside its corresponding question:

I need to make 150 from 25, 3, and 75. Let me try: 75 + 25 = 100. That leaves 3. 100 * 3 = 300, too big. What about 25 * 3 = 75, then 75 + 75 = 150. That works! 25 * 3 + 75.

Surprisingly, this worked quite well! Mean trace lengths went from ~13 sentences at step 0, to ~22 sentences at step 64, and finally settled at ~15.5 sentences at the final step, 256. Interestingly, all of these sentence counts are larger than the one in the example (6), and the length increases as more RL is applied. This was enough to break the reward plateau of 0.4, achieving a final reward of ~0.8 after training for the same number of steps.

Qualitative Analysis

So what do these traces look like?

Well, they look quite similar to the example in direction. Most of the traces consist of the same cognitive template: propose, verify, backtrack, retry — but they scale the depth of the search far beyond what was demonstrated. The example has a search depth of 1 (try, fail, succeed). The model learns to extend this into much deeper search trees, and RL progressively makes that search more efficient and productive.

Here's a trace from a partially trained checkpoint:

"I need to make 658 from 98, 28, and 20. Let me try: 98 + 28 + 20 = 146, too small. What about 98 * 28 = 2744, too big. I need a way to subtract. 98 - 28 = 70, then 70 + 20 = 90, still too small. [~30 more lines of trial-and-error] ...98 + 20 * 28 = 98 + 560 = 658. That works! 98 + 20 * 28"

And from a fully trained checkpoint:

"I need to make 658 from 98, 28, and 20. Let me try: 98 + 28 + 20 = 146, too small. What about 98 * 28 - 20 = 98 * 28 - 20 = 2744 - 20 = 2724, too big. What about 98 + 28 * 20 = 98 + 560 = 658. That works! 98 + 28 * 20"

The partially trained model searches at depth >30 before finding the answer. The fully trained model finds it at depth 2. Both are deeper than the example's depth of 1, but RL has compressed the search into something far more directed.

What Happens When You Remove the Example at Test-Time?

A natural question is whether the model has internalized this reasoning pattern, or whether it only reproduces it because the example is in context. To test this, I evaluated Llama-3.1-8B-Instruct on 50 Countdown problems under three settings:

| Setting | Train Prompt | Test Prompt | Accuracy |

|---|---|---|---|

| 1 | One-shot | One-shot | 80% |

| 2 | One-shot | Zero-shot | 58% |

| 3 | Zero-shot | Zero-shot | 46% |

Removing the example at test time (setting 2 vs. 1) does hurt performance, but the model still substantially outperforms the zero-shot baseline (setting 3). The reasoning pattern has been partially internalized through RL training.

What's particularly interesting is the qualitative difference between settings 1 and 2. In setting 1, traces are fairly overfit to the example's wording — the model uses the same phrases ("Let me try," "too big," "That works!") almost verbatim:

Setting 1: I need to make 190 from 54, 51, 79, and 6. Let me try: 54 + 51 + 79 - 6 = 178, too low. What about 79 + 54 + 51 + 6 = 190. That works!

In setting 2, when the example is absent, the high-level cognitive template — generate candidates, verify, iterate — persists, but the surface-level phrasing is much more diverse:

Setting 2: To calculate 124 using the given numbers, I will try different combinations:

1. 33 + 57 + 62 - 38 = 114 (close, but not 124)

2. 33 + 62 + 38 - 57 = 72 (not 124)

3. 57 + 62 + 38 - 33 = 124 (found it!)Final answer: 57 + 62 + 38 - 33.

Why This Matters

The core takeaway is that prompts can prime cognitive behaviors that shape the trajectory of an RL run. The single example changed the space of behaviors that RL could explore and refine. Without the example, the model never discovered the generate-and-test strategy on its own within 256 steps. With it, the model adopted the strategy immediately and RL spent its budget making the search more efficient rather than discovering that search is useful in the first place.

This finding is in some ways similar to what Gandhi et al. (2025) find, except that the priming could occur via the prompt and not via fine-tuning.

There are several directions I'd like to explore from here. How sensitive is this effect to the quality or style of the example? Would multiple examples with distinct cognitive templates work better? Would RL learn to choose which to deploy when? Can prompt-priming transfer to other tasks? These are questions I'm looking forward to digging into.

References

Gandhi, K. et al. (2025). Cognitive behaviors that enable self-improving reasoners, or, four habits of highly effective STaRs. CoRR, abs/2503.01307. arxiv.org/abs/2503.01307.

Stojanovski, Z. et al. (2025). Reasoning Gym: Reasoning environments for reinforcement learning with verifiable rewards. In Advances in Neural Information Processing Systems, Datasets and Benchmarks Track. openreview.net/forum?id=GqYSunGmp7.

Thinking Machines Lab (2025). Tinker. thinkingmachines.ai/tinker.